GSA SER Verified Lists Vs Scraping

Understanding the Core Difference

When building links with GSA Search Engine Ranker, efficiency is everything. Two dominant approaches fuel its campaigns: importing pre-made verified lists and generating fresh targets through real-time scraping. Choosing between GSA SER verified lists and scraping is a fork in the road that every serious user eventually reaches. Both methods feed the engine with places to post, but their underlying logic, cost, speed, and long-term value differ sharply. Understanding this split is the first step toward campaigns that build rankings instead of wasting resources.

What Is a Verified List in GSA SER?

A verified list is a static file containing URLs where GSA SER has already submitted a link successfully. These lists are typically sold, shared, or exported after aggressive testing. They come pre-sorted by platform type, such as WordPress comments, guestbooks, article directories, or forums. Each entry has already passed at least one round of registration and posting, so the engine skips the guesswork and goes straight to link creation. Proponents like them because they eliminate the most resource-intensive part of the workflow: searching with footprints, detecting the engine, and spending captcha solves just to find out whether a form will accept a post. Compared with scraping, verified lists offer instant gratification and predictable success rates, at least on the first run.

The Illusion of Freshness

The critical weakness sits in the word "verified." A platform that worked thirty days ago might now be offline, moderated, flagged as spam, or running a different CMS. Live campaigns that rely solely on imported lists slide down a decay curve, burning proxies, threads, and retry attempts on dead URLs. Despite promises of daily updates, few list providers can genuinely re-test tens of thousands of targets every 24 hours. The result is a constant, hidden loss of efficiency that scrapers largely avoid, because they pull targets that search engines have indexed recently.
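To put a rough number on that loss, the sketch below estimates what share of submission attempts goes to dead URLs. It is a back-of-the-envelope model, not anything GSA SER reports: it assumes each live target needs one attempt and each dead target is retried a fixed number of times before being dropped. The function name and the example figures are hypothetical.

```python
def wasted_attempt_share(live: int, dead: int, retries_per_dead: int) -> float:
    """Share of submission attempts spent on dead URLs.

    Hypothetical model: each live target costs one attempt, and each dead
    target is tried `retries_per_dead` times before the engine gives up.
    """
    wasted = dead * retries_per_dead          # attempts that can never succeed
    total = live + wasted                     # all attempts made on the list
    return wasted / total if total else 0.0   # an empty list wastes nothing


if __name__ == "__main__":
    # Illustrative figures only: a 50,000-entry list where 10,000 URLs have died.
    share = wasted_attempt_share(live=40_000, dead=10_000, retries_per_dead=3)
    print(f"{share:.1%} of attempts hit dead URLs")
```

With only a fifth of the list dead, three retries per dead URL already push the wasted share to about 43% of all attempts, which is why the loss feels larger than the dead-link count alone suggests.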

How Real-Time Scraping Works

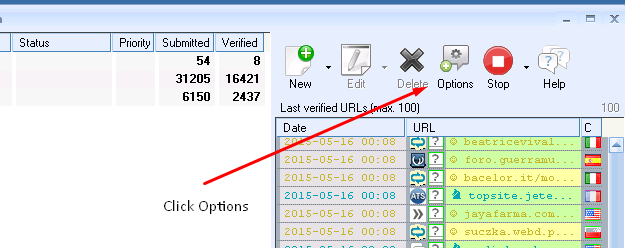

Scraping in GSA SER instructs the software to hunt for targets on the fly using search engines. By querying Google, Bing, or custom engines with footprints such as "powered by WordPress" or "add new comment," the engine harvests URLs directly from the search index. Because these pages were indexed recently and are usually still online, a noticeably higher share of them tends to verify. The SER engine control panel lets you blend custom footprints with platform identifiers, so freshly indexed, low-competition targets can enter the queue soon after a search engine discovers them. In the verified lists vs. scraping debate, scraping is the aggressive, dynamic choice that refuses to live off stale breadcrumbs.

Footprint Quality and Search Engine Limits

The scraping side has its own headaches. Search engines aggressively throttle and ban IPs that send automated queries, so you need a robust rotating proxy setup, or the "Use proxies for scraping" option backed by plenty of spare proxies. Poorly built footprints also drag in huge volumes of junk results, flooding your temporary folder with irrelevant pages. The skill lies in crafting tightly focused search strings that isolate platforms requiring no login and running weak anti-spam protection. When a footprint becomes widely known, returns diminish because competitors hammer the same search phrases. This is where verified lists sometimes hold an edge: their targets are not being discovered simultaneously by every other scraper in real time.

Why the Choice Matters for Campaign Velocity

Velocity is the number of verified links you build per hour. Verified lists bypass the hunting phase entirely, letting the software skip search and engine detection and go straight to submission. For tier-2 and tier-3 link blasts, where raw volume outweighs nuanced quality, this can double or triple output on the same hardware. Pure scraping, by contrast, requires the engine to download, analyze, and attempt registration on every freshly scraped URL, which eats CPU cycles and memory. Many power users solve this with a hybrid: they scrape daily into a master database, verify in bulk overnight, and then feed the newly verified targets into list mode for high-speed posting the following day.

Cost Considerations and Hidden Expenses

Purchasing verified lists can seem cheaper at first glance: often a one-time payment or a monthly subscription for a curated set. Yet the time spent on dead URLs, the wasted captcha credits, and the proxy bandwidth billed for futile retries can quietly exceed the list price. Scraping shifts the cost to proxy infrastructure and search engine API usage. If you route scraping through residential proxies or expensive backconnect networks, a month of aggressive searching can cost more than a year of list subscriptions. Any comparison of verified lists and scraping is incomplete without your monthly captcha and proxy bills: a list dominated by reCAPTCHA-protected platforms will drain captcha balances faster than freshly scraped forums with simple text-based questions.
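One way to make that comparison concrete is to divide each approach's full monthly bill by the live links it actually produced. The sketch below does exactly that; the `MonthlyCosts` class and every figure in the example are hypothetical placeholders to be replaced with your own invoices and measured results.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCosts:
    """Hypothetical monthly cost breakdown for one link-sourcing approach."""
    source_cost: float            # list subscription (0 if you scrape yourself)
    proxy_cost: float             # proxy and bandwidth bill
    captcha_cost_per_1000: float  # price per 1,000 captcha solves
    captcha_solves: int
    live_links: int               # live links actually built this month

    def total(self) -> float:
        captcha = self.captcha_cost_per_1000 * self.captcha_solves / 1000
        return self.source_cost + self.proxy_cost + captcha

    def cost_per_live_link(self) -> float:
        if self.live_links == 0:
            raise ValueError("no live links built; cost per link is undefined")
        return self.total() / self.live_links


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    bought_list = MonthlyCosts(50.0, 30.0, 2.0, 40_000, 8_000)
    scraping = MonthlyCosts(0.0, 120.0, 2.0, 15_000, 9_000)
    for name, plan in (("list", bought_list), ("scraping", scraping)):
        print(f"{name}: ${plan.total():.2f} total, "
              f"${plan.cost_per_live_link():.4f} per live link")
```

The point is not which plan wins with these made-up numbers, but that the captcha line and the live-link denominator often move the result more than the sticker price does.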

Platform Diversity and Footprint Rot

A healthy link profile demands diverse platforms, not just a tsunami of WordPress blogs. Verified lists tend to cluster around the easiest engines that rarely change, such as certain comment scripts and link directories. Scraping with varied footprints can pull in image comments, trackbacks, wikis, and obscure CMS platforms that lists rarely cover. These oddball platforms often have lower spam scores because fewer SEOs target them. Scrapers can pivot on Monday to newly patched forum software while list users wait for the next email update. That agility pays off when search engines start devaluing yesterday's gold mines.
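Before importing a list, it can help to check how concentrated it is. The sketch below tallies each platform type's share of a list given as `(url, platform)` pairs; that input format and the platform labels are assumptions, since list vendors export in different layouts.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def platform_shares(targets: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Return each platform's share of the list, most common first."""
    counts = Counter(platform for _url, platform in targets)
    total = sum(counts.values())
    return {platform: n / total for platform, n in counts.most_common()}


if __name__ == "__main__":
    # Hypothetical sample; a real list would be loaded from the vendor's export.
    sample = [
        ("http://a.example/post", "wordpress_comment"),
        ("http://b.example/post", "wordpress_comment"),
        ("http://c.example/wiki", "mediawiki"),
        ("http://d.example/gb", "guestbook"),
    ]
    for platform, share in platform_shares(sample).items():
        print(f"{platform:<20} {share:.0%}")
```

If one platform accounts for most of the list, that is the clustering described above; varied scraping footprints are one way to dilute it.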

When to Choose Each Method

If you manage a single money site with a tight, curated tier-1 layer, hand-picked verified lists from reputable sellers can protect your domain from the chaotic randomness of scraped comment sections. Importing a small, vetted list of editorial-quality targets is closer to white-hat ideals than mass posting. For a heavy-duty churn-and-burn project, or a tier-3 buffer that needs millions of links, pure scraping or a scrape-then-verify pipeline keeps the funnel filled without relying on third parties. The right answer often comes down to risk tolerance: with a list you inherit someone else's research, while scraping puts the entire universe of indexable platforms under your own control.

Combining Both for Maximum Throughput

Advanced GSA SER practitioners rarely treat this as an either/or decision; they fold verified lists into their scraping ecosystem. A project that prioritizes the verified list for immediate link drops while scraping constantly in the background can keep topping up the queue with fresh targets. If a target from the verified list fails five times in a row because its domain is dead, the engine simply moves on and pulls a new URL from the freshly scraped pool. This hybrid model dissolves the verified lists vs. scraping conflict and runs both in parallel. You get the speed of pre-verified targets plus a steady supply of machine-discovered opportunities, all governed by retry logic that abandons junk without mercy.
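The hybrid flow can be sketched as two queues with a per-target failure counter. This is not GSA SER's internal code: the `HybridQueue` class, the `post` callback standing in for a real submission, and the give-up threshold are hypothetical, with the threshold set to five to mirror the rule described above.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Optional, Tuple

MAX_FAILURES = 5  # assumed give-up threshold ("fails five times in a row")


class HybridQueue:
    """Tries pre-verified targets first, then falls back to scraped ones."""

    def __init__(self, verified: List[str]) -> None:
        self.verified: Deque[str] = deque(verified)
        self.scraped: Deque[str] = deque()
        self.failures: Dict[str, int] = {}

    def add_scraped(self, urls: List[str]) -> None:
        """Called whenever background scraping finds new targets."""
        self.scraped.extend(urls)

    def _next(self) -> Optional[Tuple[str, Deque[str]]]:
        # Verified targets always take priority over scraped ones.
        if self.verified:
            return self.verified.popleft(), self.verified
        if self.scraped:
            return self.scraped.popleft(), self.scraped
        return None

    def run(self, post: Callable[[str], bool]) -> List[str]:
        """Attempt targets until both queues are empty; return successful URLs."""
        built: List[str] = []
        while (item := self._next()) is not None:
            url, origin = item
            if post(url):
                self.failures.pop(url, None)  # success resets the failure streak
                built.append(url)
                continue
            self.failures[url] = self.failures.get(url, 0) + 1
            if self.failures[url] < MAX_FAILURES:
                origin.append(url)  # retry later from the same queue
            # otherwise the target is abandoned for good
        return built


if __name__ == "__main__":
    q = HybridQueue(["http://old.example/a", "http://dead.example/b"])
    q.add_scraped(["http://fresh.example/c"])
    # The stand-in `post` fails for any URL containing "dead".
    print(q.run(lambda url: "dead" not in url))
    # prints ['http://old.example/a', 'http://fresh.example/c']
```

Requeuing a failed target behind the rest of its queue spreads the retries out in time, which gives a temporarily unreachable site a chance to recover before it is abandoned.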

Final Takeaways

Choosing between GSA SER verified lists and scraping shapes your entire link-building pipeline. Verified lists deliver instant scale but rot silently. Scraping offers living, breathing targets but demands technical skill and a constant supply of proxies. Measure both against your campaign goals, hardware limits, and the quality expectations of each tier. A static list of 50,000 targets might build 5,000 live links today and only 200 a week later. A well-tuned scraping setup might build 3,000 live links today, 3,000 tomorrow, and 3,000 next year. Neither approach is universally superior, but understanding the decay curve and the cost of freshness lets you build a setup that spends money on progress instead of pretending that yesterday's URLs are still listening.
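The list figures in that example imply a steep daily decay. Assuming, purely for illustration, that the drop from 5,000 to 200 live links per day follows a constant daily rate over seven days (the text gives only the two endpoints), the sketch below recovers that rate and compares a week of list output with the steady 3,000 links per day from the scraping example. The function names are hypothetical.

```python
def implied_daily_retention(start: float, end: float, days: int) -> float:
    """Constant daily survival rate that turns `start` into `end` over `days`."""
    return (end / start) ** (1 / days)


def decaying_total(start: float, retention: float, days: int) -> float:
    """Links built over `days` days when daily output shrinks geometrically."""
    return sum(start * retention ** d for d in range(days))


if __name__ == "__main__":
    r = implied_daily_retention(5_000, 200, 7)
    list_week = decaying_total(5_000, r, 7)
    scrape_week = 3_000 * 7
    print(f"daily retention ~ {r:.3f} (about {1 - r:.0%} lost per day)")
    print(f"first week: list ~ {list_week:,.0f}, scraping ~ {scrape_week:,}")
```

Under that assumption the list loses roughly 37% of its remaining yield each day and builds about 13,000 links over the first week, against 21,000 for the scraping setup. Real decay is rarely this smooth, so treat the numbers as a shape, not a forecast.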
